Models are everywhere these days: new business models, large language models, digital twins. They range from foundation models, which take millions of dollars and PhD-level computer scientists to train and operate, down to the CAD / STL models that hobbyists use to draw and 3D print toys and tools.

At the risk of stating the obvious, it turns out that the physical world and the universe itself are rather complex. Who knew? Literally all of humanity, since forever.

I assert that modeling is the fundamental way that human brains simplify reality for useful purposes, allowing us to approximate well enough that we can solve difficult problems. So what’s in a model?

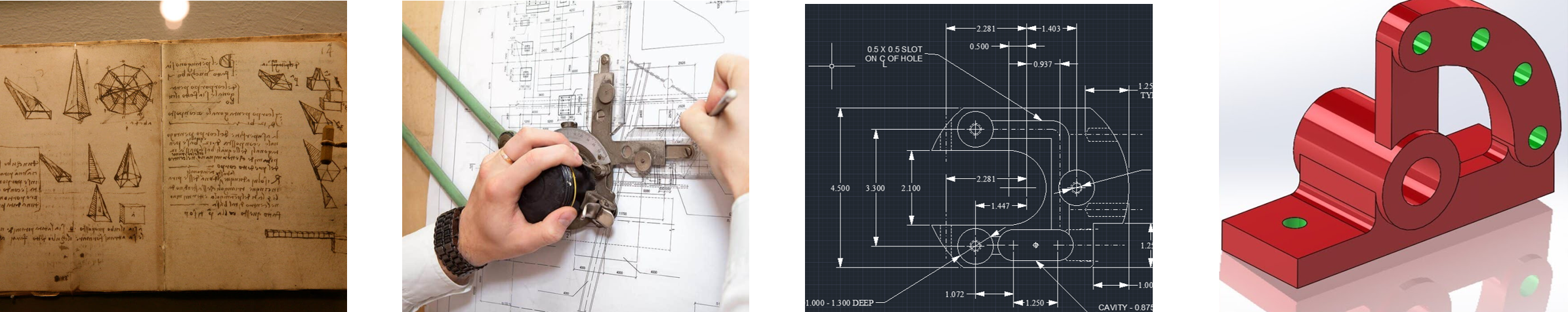

Throughout this series, I’ll be drawing upon my experience as a design engineer working in 3D modeling software. At least for me, entering through the door of trying to represent real objects, machines, and systems with computer code and equations helped build physical intuition that I now apply to more vague and squishy concepts like data models and digital twins.

Models are used everywhere but are especially common in engineering, science, and manufacturing–so let’s start there.

All models are wrong, but some are useful. ~ George E. P. Box

Most of us have probably built a miniature model of a bridge at some point as kids (or adults).

Using toothpicks, popsicle sticks, glue, and creativity, we explored the relationships between shape, structure, strength, and optimization. Constraints and criteria like what materials we could use, how light the bridge needed to be, or how broad a span it needed to cross led each of us to our own design. Then we watched the bridges tested to failure, weights added one by one, until one candidate proved the victor: an evolutionary algorithm, played out across a single generation.

Those of us destined to become engineers may not have stopped there. Building a small prototype, iterating while it was cheap and easy, and interrogating it with weight experiments let us validate our designs with greater confidence in the outcome. Stopping just shy of collapse let us strategically reinforce weak points.

But any amount of building and testing to failure is still a pretty expensive way to run experiments. This is where the crucial leap from physical models to equation-based numerical, mathematical, and computational modeling comes in.

It truly is a leap of logic to believe that written symbols on a sheet of paper or zeroes and ones moving across silicon can be trusted well enough to build bridges that trains will cross or validate rockets that will launch people to the moon. Yet that is exactly what models have let us do, by predicting behavior well enough for the task at hand.

Drawing Boxes Around Things – A brief history of modeling and design.

Since the dawn of time, humans have been drawing things to convey visual or spatial information without being forced to wave their hands around. Very handy (pun intended). Modern advances in science, technology, and manufacturing would have been impossible without the formal standards of drafting which emerged during the Industrial Revolution. It took decades of intense international collaboration, motivated by economic opportunity, to refine this expert consensus on a universally understood and unambiguous symbolic representation of knowledge. The end result is that a design or print can be sent to three machine shops in three separate countries and, theoretically, they should all produce the exact same part.

I would describe drafting standards as one form of an information model. Good information models are semantic, interoperable, logical, interpretable, and generally help us “speak the same language.” Mathematics itself is, arguably, the ultimate information model… but I’ll leave that philosophical conversation to others better positioned to debate it.

The next breakthrough came once computers became powerful enough to run 2D drawing software, and eventually 3D modeling software, that could transform those sketches into something digital. What’s interesting is that even now, decades after the advent of 3D modeling, sketches and 2D drawings are still in very common use.

Why? Because higher-dimensional models are inherently more complex and expensive.

There are no shortcuts: the system of equations at the core of a 3D model is structured to produce only valid, solid, contiguous shapes. Constraining lines, building primitive forms into an endless variety of shapes, and then aligning and constraining those shapes into assemblies is a lot of work, and the end result is fragile. Changing or losing just a single component file can “break” the whole setup or ruin hours of someone else’s work. A highly detailed model will also make other design calculations (like thermal or fluid-flow analysis) too computationally intense to be practical, so you end up having to approximate and simplify anyway.

And after all that effort, a 2D drawing (PDF) is usually still required to convey basic information such as material, surface treatment, precise datums, and tolerances!
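To put a rough number on the cost of resolution mentioned above, here is a minimal sketch that counts the cells in a uniform grid. It is an illustration only: real solvers use far more sophisticated meshes, and the function name `cell_count` and the 1 m bounding box are assumptions made for this example.

```python
def cell_count(side_mm: int, cell_mm: int, dims: int = 3) -> int:
    """Cells in a uniform grid covering a square (dims=2) or cube (dims=3)."""
    # Ceiling division on integers, so a partial cell at the edge still counts.
    cells_per_side = -(-side_mm // cell_mm)
    return cells_per_side ** dims


if __name__ == "__main__":
    side_mm = 1000  # hypothetical 1 m bounding box, chosen for illustration
    for cell_mm in (100, 50, 25):
        cells_2d = cell_count(side_mm, cell_mm, dims=2)
        cells_3d = cell_count(side_mm, cell_mm, dims=3)
        print(f"{cell_mm} mm cells: 2D = {cells_2d}, 3D = {cells_3d}")
```

Each time the cell size is halved, the 2D count grows by a factor of 4 while the 3D count grows by a factor of 8, which is one simple reason the extra dimension and the extra detail both get expensive quickly.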

Design Intent and Model Complexity – Choose the boxes you draw wisely.

Design always involves tradeoffs. Without an objective load limit or distance to span (the design space), our childhood bridge competition wouldn’t have made much sense. Gravity is a very strict judge, and adding material for strength also requires the bridge to carry that extra weight.

As technical people of all disciplines, we must increasingly invest our time and expertise into the process of choosing the right model(s) from the many powerful and sophisticated toolsets at our fingertips. Blindly throwing computational power at a problem has never been an efficient approach, and using expensive, powerful models (such as LLMs) for deterministic, bounded tasks (like arithmetic) is both foolish and dangerous. How should an engineer or designer go about selecting which to use, especially as the technology continues to advance so rapidly?

There are three main drivers of model cost and complexity: scope / scale, fidelity / detail, and purpose / trust. Here’s a list of questions to ask yourself the next time you find yourself choosing how to model something:

Scope & Scale – “Draw a box around it.”

- What am I trying to model and where are the boundaries?

- What system or behavior am I trying to understand more about?

- What are the system inputs and outputs not being modeled?

- Where should I place subsystem boundaries to keep it feasible?

Fidelity & Detail – “What’s in the box?”

- How much detail do I need to model the behavior of interest?

- Does my data go down to that level? Are there data that don’t?

- What is the computational burden of getting that precise?

- What should the frequency or refresh rate be?

Purpose & Trust – “Why is this box here?”

- What kind of future decisions am I going to make using this?

- How deeply or closely should I model the system to be confident?

- How can I validate that it is accurate (enough) to the task?
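As one way to make the last question concrete, here is a minimal sketch that compares a model's predictions against measurements and checks the worst-case error against a tolerance set by the task. The function names and the numbers are hypothetical placeholders, not results from any real test, and a worst-case check is only one of many possible acceptance criteria.

```python
from typing import Sequence


def max_abs_error(predicted: Sequence[float], measured: Sequence[float]) -> float:
    """Largest absolute difference between paired predictions and measurements."""
    if len(predicted) != len(measured) or not predicted:
        raise ValueError("need two non-empty sequences of equal length")
    return max(abs(p - m) for p, m in zip(predicted, measured))


def is_accurate_enough(
    predicted: Sequence[float], measured: Sequence[float], tolerance: float
) -> bool:
    """True when the worst-case error is within the tolerance the task allows."""
    return max_abs_error(predicted, measured) <= tolerance


if __name__ == "__main__":
    # Made-up deflections (mm) at three load steps, for illustration only.
    predicted = [1.0, 2.0, 3.0]
    measured = [1.5, 2.0, 3.25]
    print(max_abs_error(predicted, measured))
    print(is_accurate_enough(predicted, measured, tolerance=0.5))
    print(is_accurate_enough(predicted, measured, tolerance=0.25))
```

The tolerance is the important design choice here: it comes from the decision the model supports, not from the model itself, which is why "accurate (enough)" depends on purpose.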

In summary, a simple and robust approach to modeling complex systems comes down to a few key best practices:

- Always keep the scope as small as possible while still containing the behavior and system of interest.

- Higher levels of detail can increase confidence, but they always increase complexity.

- Models should be validated with data, but trying to predict things will always be difficult.

Thanks for reading! Come back for Model-Based Everything Part 2, where I’ll introduce systems- and design-thinking principles, walking up the complexity curve through the lens of solving problems with modern engineering tools.